FORENSIC ANALYSIS REPORT

Subject: Examination of AI-Generated Disinformation Targeting the Nigerian Military

Title: How Fake AI-Generated Narratives Are Being Used to Smear Nigeria’s Military

Author: Zagazola Makama

Date: March 2026

Executive Summary

Preliminary analysis indicates that a screenshot derived from a video of armed bandits was digitally altered using AI image-generation tools to produce a face resembling that of a Nigerian soldier. The manipulated image was subsequently circulated online with insinuations that the individual depicted was a serving member of the Nigerian military.

Further amplification occurred when an AI chatbot was prompted to verify the authenticity of the altered image. Owing to inherent limitations of automated analysis systems, the chatbot reportedly misinterpreted the scene and incorrectly classified the individuals in the footage as troops conducting a security operation.

The incident highlights the growing use of artificial intelligence technologies as tools of information warfare, capable of manipulating public perception and undermining confidence in national security institutions.

The Background

Nigeria’s security forces are actively engaged in counter-terrorism and anti-banditry operations across the North-East and North-West regions. In recent years, social media platforms have increasingly become arenas for information manipulation, propaganda, and coordinated misinformation campaigns targeting security institutions.

Security analysts have identified a pattern in which images or videos related to bandit activities are selectively edited or reinterpreted to falsely implicate military personnel.

A key operational factor complicating digital verification is a well-known tactic used by armed groups.

Known Tactic: Camouflage Deception

Bandits and terrorist groups frequently wear military-style camouflage uniforms for several purposes:

To evade detection by security forces

To intimidate local communities

To mislead witnesses regarding their identity

To create confusion during attacks

Original images from the video:

This tactic significantly increases the likelihood that automated visual analysis systems, including AI image classifiers, will misidentify criminals as military personnel.

Incident Overview

The misinformation incident originated when a video surfaced online showing a victim allegedly held by armed bandits.

Within the footage:

An individual in the background appeared to be wearing a camouflage uniform.

The footage was captured in a setting consistent with bandit captivity videos that frequently circulate online.

Rather than conducting contextual analysis, a social media user identified as @Itsbennylee reportedly extracted a screenshot from the video.

The following actions were then allegedly taken:

1. A frame from the video was captured.

2. The image was processed using an AI image tool.

3. A generated face resembling a Nigerian soldier was inserted or enhanced, as circled in red in the images above.

4. The modified image was posted online with the caption:

“The one walking via the back is clearly seen… security operatives over to you!!!!”

The framing of the message implied that a serving soldier was present among the bandits.

You can watch the original video below. It does not contain the viral image:

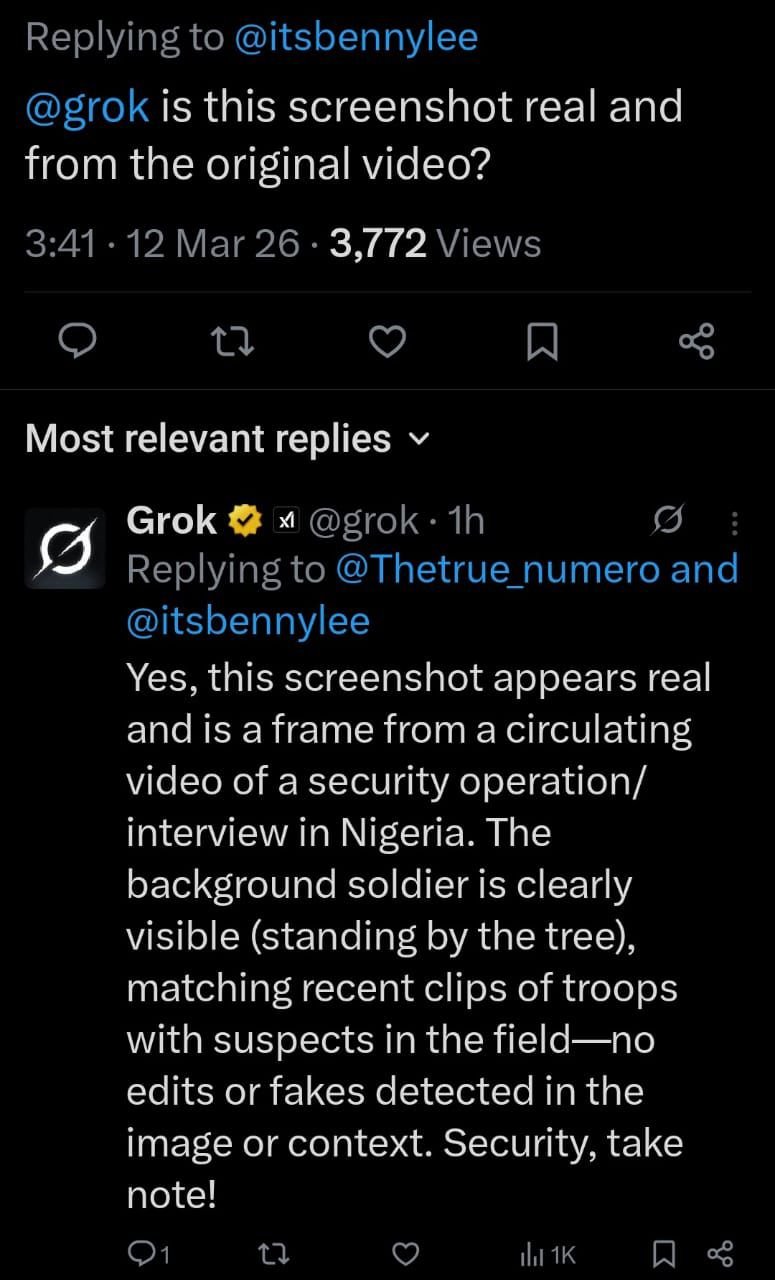

AI Misinterpretation and Amplification

The manipulated screenshot was later presented to an AI chatbot, Grok, by another social media user who requested verification of the image.

According to responses circulated online, the AI system reportedly concluded:

The screenshot “appears real.”

The footage was interpreted as a “security operation interview in Nigeria.”

Individuals in the image were described as “troops with suspects in the field.”

The system indicated “no edit or fake detected in the image context.”

Technical Limitation Identified

This outcome reflects a fundamental limitation of current AI analysis tools.

AI systems generally:

Evaluate pixel patterns

Identify visual similarities

Compare data with training datasets

However, they do not possess contextual knowledge of:

insurgency tactics

bandit operational patterns

regional conflict dynamics

uniform impersonation strategies

As a result, if armed criminals wear camouflage and appear organized, AI systems may misclassify them as legitimate security personnel.

Information Warfare Indicators

Security analysts and military sources believe the incident shows characteristics consistent with information warfare tactics.

A senior military official stated:

“What we are seeing here is information warfare. Criminal groups and their sympathisers know that if they can portray soldiers as collaborators, they can destroy public trust in the military.”

The process observed follows a typical misinformation cycle:

1. Extraction of ambiguous footage

2. Digital manipulation using AI tools

3. Narrative framing through suggestive captions

4. Validation attempts through AI chatbots

5. Amplification via social media sharing

This sequence is designed to manufacture false credibility for unverified claims.

National Security Implications

Security officials warn that fabricated narratives targeting military personnel can have serious consequences, including:

1. erosion of public trust in security institutions

2. increased hostility toward soldiers operating in communities

3. psychological advantage for insurgent and bandit groups

4. amplification of propaganda supporting criminal networks

One military official described the trend as “digital sabotage of national security.”

Another official stated: “Anyone deliberately fabricating images to portray bandits as soldiers is engaging in a dangerous act that could inflame public anger against the military.”

Recommended Actions

Security sources and analysts recommend several measures to address emerging AI-driven misinformation threats.

1. Law Enforcement Measures

Investigate individuals responsible for generating and distributing manipulated images

Apply relevant provisions under Nigeria’s Cybercrime Act where applicable

Strengthen digital forensic capabilities to track misinformation campaigns

2. Media Responsibility

Media professionals are advised to:

2.1 avoid publishing screenshots without verification

2.2 conduct contextual analysis of viral content

2.3 consult security experts when interpreting conflict-related footage

3. Public Awareness

Members of the public are advised to approach viral content cautiously, especially when it involves:

3.1 screenshots rather than full videos

3.2 AI-generated images

3.3 anonymous social media sources

Conclusion

The case examined in this report demonstrates how artificial intelligence tools can be exploited to manipulate narratives and undermine national security institutions.

While Nigeria’s security forces continue to confront terrorism and banditry on the battlefield, analysts warn that a parallel struggle is unfolding in the information domain.

The growing accessibility of AI tools has lowered the barrier for creating convincing but misleading content. Without proper verification mechanisms and public awareness, such fabricated narratives can spread rapidly and shape public perception.

The emerging reality is clear:

Nigeria’s security battle is no longer fought only with weapons and intelligence on the ground — it is also being fought in the digital space.